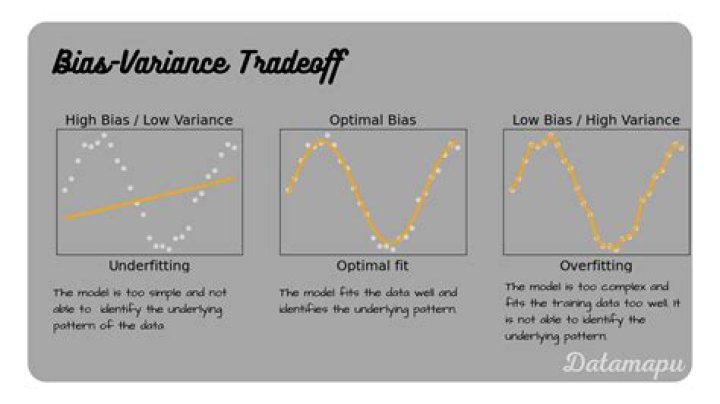

What is overfitting (high variance)?

A model is overfit if performance on the training data, used to fit the model, is substantially better than performance on a test set, held out from the model training process. When a model overfits the training data, it is said to have high variance.
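The train/test gap described above can be reproduced with a small sketch. Everything here is an illustrative assumption rather than part of the original answer: the synthetic data (`make_data`), the 20% label noise, and the choice of a 1-nearest-neighbor classifier as a model that memorizes its training set.

```python
import random

def make_data(n, rng, noise=0.2):
    """Points x in [0, 1); the true label is 1 when x > 0.5, flipped with probability `noise`."""
    data = []
    for _ in range(n):
        x = rng.random()
        label = int(x > 0.5)
        if rng.random() < noise:
            label = 1 - label  # inject label noise
        data.append((x, label))
    return data

def predict_1nn(train, x):
    """Return the label of the closest training point (pure memorization)."""
    return min(train, key=lambda p: abs(p[0] - x))[1]

def accuracy(train, data):
    """Fraction of points in `data` that the 1-NN model built on `train` labels correctly."""
    return sum(predict_1nn(train, x) == y for x, y in data) / len(data)

rng = random.Random(0)
train = make_data(200, rng)   # used to fit the model
test = make_data(2000, rng)   # held out from fitting

train_acc = accuracy(train, train)
test_acc = accuracy(train, test)
print(f"train accuracy: {train_acc:.2f}")
print(f"test accuracy:  {test_acc:.2f}")
```

Because each training point is its own nearest neighbor, the training accuracy is perfect, while the held-out accuracy is noticeably lower. That gap is the signature of overfitting.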

Is overfitting the same as high variance?

Models with low bias (which can learn from the training data well) often have high variance (and therefore an inability to generalize to new data), and this phenomenon is referred to as “overfitting”. By definition, therefore, high model variance despite low model bias is referred to as overfitting.

What is meant by high variance?

Variance measures how far a set of data is spread out. A small variance indicates that the data points tend to be very close to the mean, and to each other. A high variance indicates that the data points are very spread out from the mean, and from one another.
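As a quick illustration of the statistical definition, the sketch below compares two made-up samples that share the same mean but differ in spread. The sample values are assumptions chosen for the example; `statistics.pvariance` computes the population variance.

```python
from statistics import mean, pvariance

tight = [9, 10, 11]    # values close to the mean
spread = [0, 10, 20]   # values far from the mean

for name, values in (("tight", tight), ("spread", spread)):
    print(name, "mean:", mean(values), "variance:", round(pvariance(values), 2))
```

Both samples are centered on the same value, but the second has a much larger variance because its points lie farther from the mean.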

What does high variance mean in Machine Learning?

Variance, in the context of Machine Learning, is a type of error that occurs due to a model’s sensitivity to small fluctuations in the training set. High variance would cause an algorithm to model the noise in the training set. This is most commonly referred to as overfitting.
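One way to make "sensitivity to small fluctuations in the training set" concrete is to refit a model on many freshly drawn training sets and measure how much its prediction at a single point changes. The sketch below is a minimal illustration under assumed conditions: a synthetic target `sin(2*pi*x)`, Gaussian noise, and a hand-written k-nearest-neighbor regressor (`knn_predict`), none of which come from the original text.

```python
import math
import random
from statistics import pvariance

def sample_training_set(n, rng, noise_sd=0.3):
    """Draw n noisy observations of y = sin(2*pi*x) on [0, 1)."""
    xs = [rng.random() for _ in range(n)]
    return [(x, math.sin(2 * math.pi * x) + rng.gauss(0, noise_sd)) for x in xs]

def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

rng = random.Random(1)
x0 = 0.3                      # fixed query point
preds = {1: [], 15: []}       # predictions at x0, keyed by k
for _ in range(300):          # 300 independent training sets
    train = sample_training_set(50, rng)
    for k in preds:
        preds[k].append(knn_predict(train, x0, k))

var_by_k = {k: pvariance(values) for k, values in preds.items()}
for k, v in var_by_k.items():
    print(f"k={k:2d}  variance of prediction at x0: {v:.4f}")
```

With `k=1` the prediction follows whichever noisy point happens to land nearest, so it varies a lot from one training set to the next. Averaging over 15 neighbors smooths out the noise and yields a much lower variance.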

What does it mean to underfit or overfit your data model?

Overfitting in machine learning: as the graph above shows, a model that is fitted too closely to the individual training data points achieves high accuracy on the training data, but gives low accuracy when it is evaluated on test data. Such a model is overfitting. The relationship between bias and variance is often illustrated with a bullseye diagram.

What is high variance and high bias?

High Bias – High Variance: Predictions are inconsistent and inaccurate on average. Low Bias – Low Variance: It is an ideal model. Low Bias – High Variance (Overfitting): Predictions are inconsistent and accurate on average. This can happen when the model uses a large number of parameters.
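The four combinations above correspond to the two model-dependent terms of the standard bias-variance decomposition of expected squared error. The notation is an assumption introduced here for illustration: the data follow $y = f(x) + \varepsilon$ with $\mathbb{E}[\varepsilon] = 0$ and $\operatorname{Var}(\varepsilon) = \sigma^2$, and $\hat{f}$ is the model fitted on a randomly drawn training set.

$$
\mathbb{E}\big[(y - \hat{f}(x))^2\big]
= \underbrace{\big(\mathbb{E}[\hat{f}(x)] - f(x)\big)^2}_{\text{bias}^2}
+ \underbrace{\mathbb{E}\big[(\hat{f}(x) - \mathbb{E}[\hat{f}(x)])^2\big]}_{\text{variance}}
+ \underbrace{\sigma^2}_{\text{irreducible noise}}
$$

"Inaccurate on average" corresponds to a large bias term, and "inconsistent" corresponds to a large variance term.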

Why does overfitting have high variance and low bias?

Underfitting happens when a model is unable to capture the underlying pattern of the data. Overfitting happens when a model captures the noise along with the underlying pattern in the data, which tends to occur when a model is trained extensively on noisy datasets. Overfit models have low bias and high variance.

What is the meaning of overfitting in machine learning?

Overfitting happens when a model learns the detail and noise in the training data to the extent that it negatively impacts the performance of the model on new data. This means that the noise or random fluctuations in the training data are picked up and learned as concepts by the model.

Is high bias overfitting?

A model that exhibits small variance and high bias will underfit the target, while a model with high variance and little bias will overfit the target. A model with high variance may represent the data set accurately but could lead to overfitting to noisy or otherwise unrepresentative training data.

What is the difference between overfitting and underfitting?

Overfitting is a modeling error which occurs when a function is too closely fit to a limited set of data points. Underfitting refers to a model that can neither model the training data nor generalize to new data.

What are overfitting and underfitting?

Overfitting occurs when performance is excellent on the training data but poor on the test data. Underfitting occurs when the model is too simple, so that performance is poor on both the training and the test data.
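Both failure modes can be seen side by side by varying the flexibility of a single model. The sketch below is a rough illustration, not a canonical recipe: the synthetic data, the hand-written k-nearest-neighbor regressor, and the three values of `k` are all assumptions made for the example. Setting `k` to the full training-set size makes the model predict the global mean (too simple), while `k=1` memorizes every training point.

```python
import math
import random

def make_data(n, rng, noise_sd=0.3):
    """Draw n noisy observations of y = sin(2*pi*x) on [0, 1)."""
    xs = [rng.random() for _ in range(n)]
    return [(x, math.sin(2 * math.pi * x) + rng.gauss(0, noise_sd)) for x in xs]

def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def mse(train, data, k):
    """Mean squared error on `data` of the k-NN model built from `train`."""
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in data) / len(data)

rng = random.Random(2)
train = make_data(60, rng)
test = make_data(600, rng)

train_mse, test_mse = {}, {}
for k, label in ((60, "too simple"), (7, "moderate"), (1, "memorizes")):
    train_mse[k] = mse(train, train, k)
    test_mse[k] = mse(train, test, k)
    print(f"k={k:2d} ({label:10s}) train MSE={train_mse[k]:.3f}  test MSE={test_mse[k]:.3f}")
```

The `k=60` model has high error on both sets (underfitting). The `k=1` model has zero training error but a clearly higher test error (overfitting). An intermediate `k` typically lands between the two extremes on training error and does better than the global-mean model on test data.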

What is overfitting in ML?

Overfitting is the result of an ML model placing importance on relatively unimportant information in the training data. When an ML model has been overfit, it can’t make accurate predictions about new data because it can’t distinguish extraneous (noisy) data from the essential data that forms a pattern.

What is overfitting a model?

In statistics, overfitting is “the production of an analysis that corresponds too closely or exactly to a particular set of data, and may therefore fail to fit additional data or predict future observations reliably”. An overfitted model is a statistical model that contains more parameters than can be justified by the data.
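The idea of "more parameters than can be justified by the data" can be sketched by fitting the same ten noisy points twice: once with a two-parameter straight line, and once with a polynomial that has as many coefficients as there are points. The setup is assumed for illustration only: the true relationship `truth`, the noise level, and the helper names `fit_line` and `fit_interpolant` are hypothetical.

```python
import random

def fit_line(points):
    """Ordinary least squares for y = a + b*x (2 parameters)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    b = sxy / sxx
    a = mean_y - b * mean_x
    return lambda x: a + b * x

def fit_interpolant(points):
    """Lagrange polynomial through every point (as many parameters as points)."""
    def predict(x):
        total = 0.0
        for i, (xi, yi) in enumerate(points):
            term = yi
            for j, (xj, _) in enumerate(points):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return predict

def mse(model, points):
    """Mean squared error of `model` on (x, y) pairs."""
    return sum((model(x) - y) ** 2 for x, y in points) / len(points)

def truth(x):
    """Assumed true relationship: a straight line."""
    return 1.0 + 2.0 * x

rng = random.Random(3)
# Ten noisy training points on an even grid over [0, 1].
train = [(i / 9, truth(i / 9) + rng.gauss(0, 0.3)) for i in range(10)]
# Noise-free targets halfway between neighboring training points.
test = [((i + 0.5) / 9, truth((i + 0.5) / 9)) for i in range(9)]

line = fit_line(train)
interp = fit_interpolant(train)
for name, model in (("line (2 params)", line), ("interpolant (10 params)", interp)):
    print(f"{name:24s} train MSE={mse(model, train):.4f}  test MSE={mse(model, test):.4f}")
```

The interpolating polynomial reproduces every training point, noise included, so its training error is essentially zero. Between the training points it swings away from the underlying line, which is why its test error is far worse than that of the simple two-parameter fit.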