What is cluster computing in big data?

Cluster computing is a network-based distributed environment that can provide fast processing for very large jobs. Middleware is typically required to coordinate the machines in a cluster. The proposal quoted here introduces a middleware layer aimed at existing processing problems in distributed environments.

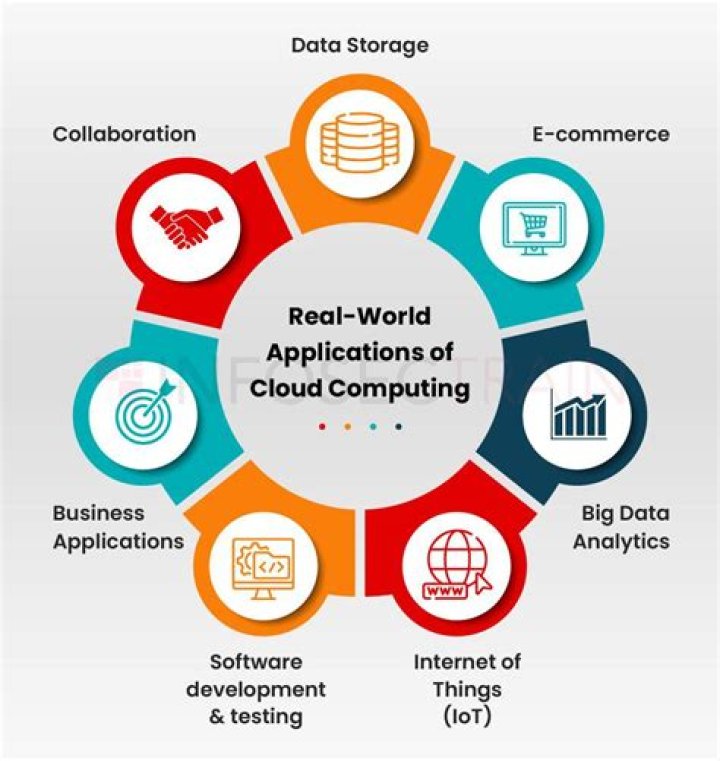

What is large-scale cloud computing?

Large-scale cloud computing systems have served as the fundamental supporting platform for big data, Internet of Things, and artificial intelligence applications for the past decade. A Markov-based model is used to examine the system’s potential failures, assess their severities, and suggest quick recoveries.
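The answer above does not describe the Markov-based model in any detail, so the following is only a minimal sketch of the general idea, not the model from the quoted work. It assumes a single node with two states, up and down, and hypothetical per-step probabilities `p_fail` and `p_repair`. Repeatedly applying the transition probabilities gives the long-run fraction of time the node is up.

```python
def steady_state_availability(p_fail, p_repair, steps=1000):
    """Iterate a two-state Markov chain (up/down) and return P(up).

    p_fail and p_repair are hypothetical per-step transition probabilities,
    chosen for illustration only.
    """
    up, down = 1.0, 0.0  # start with the node fully available
    for _ in range(steps):
        # An up node stays up unless it fails; a down node comes back if repaired.
        new_up = up * (1.0 - p_fail) + down * p_repair
        new_down = up * p_fail + down * (1.0 - p_repair)
        up, down = new_up, new_down
    return up


availability = steady_state_availability(p_fail=0.01, p_repair=0.2)
# For this two-state chain the closed form is p_repair / (p_fail + p_repair).
closed_form = 0.2 / (0.01 + 0.2)
print(round(availability, 4), round(closed_form, 4))
```

A real failure model for a large cloud system would likely have many more states (for example, degraded or recovering), but the same iterate-until-stable idea applies.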

What are the types of cluster computing?

Computer clusters can generally be categorized into three types:

- Highly available or fail-over.

- Load balancing.

- High performance computing.
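The first two cluster types in the list above can be illustrated together with a small sketch. It assumes a hypothetical `RoundRobinBalancer` class and made-up node names: requests rotate across nodes (load balancing), and a node marked as down is skipped so the remaining nodes take over (fail-over). Real clusters rely on health checks and dedicated software for this; the code only shows the idea.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hypothetical balancer: rotates over nodes and skips ones marked down."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self.healthy = set(self.nodes)
        self._ring = cycle(self.nodes)

    def mark_down(self, node):
        self.healthy.discard(node)

    def mark_up(self, node):
        if node in self.nodes:
            self.healthy.add(node)

    def next_node(self):
        if not self.healthy:
            raise RuntimeError("no healthy nodes available")
        # Advance around the ring until a healthy node turns up.
        while True:
            node = next(self._ring)
            if node in self.healthy:
                return node


balancer = RoundRobinBalancer(["node-a", "node-b", "node-c"])
first_round = [balancer.next_node() for _ in range(3)]

balancer.mark_down("node-b")  # simulate a failure
after_failure = [balancer.next_node() for _ in range(4)]
print(first_round, after_failure)
```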

What is a cluster computing system?

A cluster computing system is a collection of tightly or loosely connected computers that work together so that they act as a single entity. The connected computers execute operations together, creating the impression of a single system. Clusters are generally connected through fast local area networks (LANs).

What is an example of cluster computing?

Cluster computing is the process of sharing computation tasks among multiple computers; those computers, or machines, form the cluster. Popular applications of cluster computing include the Google search engine, earthquake simulation, petroleum reservoir simulation, and weather forecasting systems.
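As a rough illustration of sharing one computation among several workers, the sketch below splits a list into chunks, hands each chunk to a worker, and combines the partial results. It is an assumption-laden stand-in: threads on one machine play the role of cluster nodes, and the function names are made up for this example.

```python
from concurrent.futures import ThreadPoolExecutor


def split(data, parts):
    """Cut data into at most `parts` contiguous chunks of near-equal size."""
    if not data:
        return []
    size = -(-len(data) // parts)  # ceiling division
    return [data[i:i + size] for i in range(0, len(data), size)]


def partial_sum_of_squares(chunk):
    """Work done independently by one (simulated) node."""
    return sum(x * x for x in chunk)


def cluster_sum_of_squares(data, workers=4):
    chunks = split(data, workers)
    # Threads stand in for cluster nodes here; a real cluster would
    # send each chunk to a different machine over the network.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(partial_sum_of_squares, chunks))
    return sum(partials)


total = cluster_sum_of_squares(list(range(1000)))
print(total)
```

The same split, compute, and combine pattern underlies much larger jobs such as simulations and forecasting, where each node handles part of the problem.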

What is the role of cluster computing in big data?

In big data, clusters provide both the storage capacity for large data sets and the computing power to organize the data, analyze it, and respond to queries about it from remote users.

Which platform is used for large-scale computing?

Apache Hadoop and its MapReduce programming model are commonly cited as the platform used for large-scale cloud computing.
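To make the MapReduce idea concrete, here is a minimal single-process word count in plain Python. It does not use Hadoop or any Hadoop API; the function names `map_phase`, `shuffle`, and `reduce_phase` are illustrative assumptions that mirror the three stages of the model.

```python
from collections import defaultdict


def map_phase(document):
    """Emit a (word, 1) pair for every word in one document."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle(pairs):
    """Group all emitted values by key, as the framework would between phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(groups):
    """Sum the counts collected for each word."""
    return {key: sum(values) for key, values in groups.items()}


documents = ["big data needs clusters", "clusters process big data"]
pairs = [pair for doc in documents for pair in map_phase(doc)]
counts = reduce_phase(shuffle(pairs))
print(counts)
```

On a real cluster, the map calls would run on the nodes that hold each piece of the data, and the shuffle step would move the grouped pairs across the network to the reducers.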

Why do we use cluster computing?

A computer cluster can provide faster processing speed, larger storage capacity, better data integrity, greater reliability and wider availability of resources. Computer clusters are usually dedicated to specific functions, such as load balancing, high availability, high performance or large-scale processing.

Which platform is used at large scale in cloud computing?

Amazon Web Services (AWS) is one of the most successful cloud businesses. It offers infrastructure as a service (IaaS), letting you rent virtual computers that run on Amazon's infrastructure.

What is a clustered computer?

"Cluster" is a widely used term for independent computers combined into a unified system through software and networking. Clusters are typically used either for high availability, to achieve greater reliability, or for high-performance computing, to provide more computational power than a single computer can.

How do I run clustering software?

You can run clustering software either directly on bare-metal hardware, as with on-premises clusters, or in virtualized environments, as with cloud environments. Orchestrating multiple nodes in a cluster by hand is time-consuming and error-prone.

What are the different types of cluster computing workloads?

There are two main types of cluster computing workloads. One is high-performance computing (HPC): a type of computing that uses many tightly coupled worker nodes executing concurrently to accomplish a task. These machines typically need low network latency to communicate effectively.

How long does it take to build a cluster?

Launching a production-quality cluster in the cloud takes only a few minutes, from a small 10-node cluster with hundreds of available cores, to large-scale clusters with a hundred thousand or more cores. In contrast, building new clusters on-premises can take months to be ready for operation.