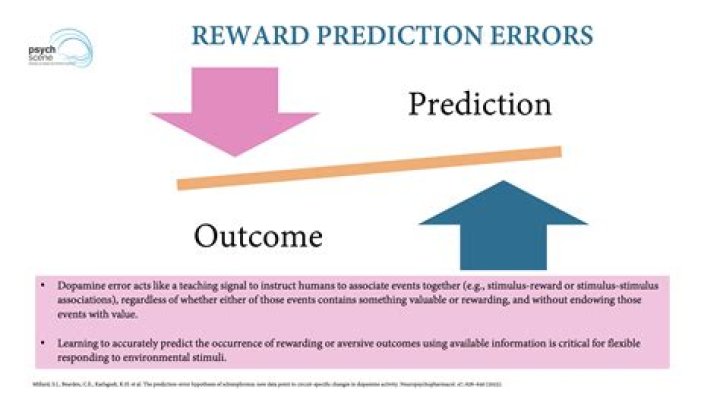

What is a reward prediction error?

Reward prediction errors consist of the differences between received and predicted rewards. They are crucial for basic forms of learning about rewards and make us strive for more rewards—an evolutionary beneficial trait.

What is prediction error theory?

A deep success story of modern neuroscience is the theory that dopamine neurons signal a prediction error, the error between what reward you expected and what you got. The theory bridges data from the scale of human behaviour down to the level of single neurons.

Is dopamine a reward signal?

Dopamine responses transfer during learning from primary rewards to reward-predicting stimuli. The dopamine reward signal is supplemented by activity in neurons in striatum, frontal cortex, and amygdala, which process specific reward information but do not emit a global reward prediction error signal.

What is prediction error in reinforcement learning?

In TD, prediction error becomes the difference between the expected value of all future reward at a certain point in time (deemed the state at time t) and the expected value of all future reward at the succeeding state (at time t + 1).

How do you find the prediction error?

The equations of calculation of percentage prediction error ( percentage prediction error = measured value – predicted value measured value × 100 or percentage prediction error = predicted value – measured value measured value × 100 ) and similar equations have been widely used.

How does prediction error lead to learning?

Firstly, prediction-error signaling regulates the amount of learning that can occur on any single cue-reward pairing. That is, the magnitude of the difference between the expected and experienced reward will determine how much learning can accrue to the cue in subsequent trials.

What is the dopamine hypothesis of reward?

An unexpected reward elicits a strong positive dopamine signal, which declines with repeated presentation and learning, such that the presentation of a predicted reward eventually no longer elicits dopamine-mediated neuronal responses.

How will you measure prediction error in regression and classification?

There are many ways to estimate the skill of a regression predictive model, but perhaps the most common is to calculate the root mean squared error, abbreviated by the acronym RMSE. A benefit of RMSE is that the units of the error score are in the same units as the predicted value.

What are TD methods?

It is a supervised learning process in which the training signal for a prediction is a future prediction. TD algorithms are often used in reinforcement learning to predict a measure of the total amount of reward expected over the future, but they can be used to predict other quantities as well.

Is TD learning model based?

Temporal difference (TD) learning refers to a class of model-free reinforcement learning methods which learn by bootstrapping from the current estimate of the value function.

Is it possible to have an error in predicting reward?

And indeed errors in predicting reward are but a sub-set of the possible errors in predictions about the world that could exist in the brain (a story for next time). But that dopamine neurons encode an error in predicting reward seems a well-established part of what they do.

Is there a link between dopamine and reward prediction error?

This correspondence between dopamine and “reward prediction error” has its roots in the branch of AI called reinforcement learning (well, technically, it’s a branch of machine learning, but as everything is now labelled AI, including a FitBit which I’m pretty sure is just an accelerometer with a strap, then AI it is).

How does the brain learn from errors in its predictions?

So the brain just needs a signal about whether or not a reward occurred, and use that to adjust connections. No complicated signal about the error in predictions is needed. So a brain could learn from reinforcement with or without an explicit signal for errors in predicting that reinforcement.

What is the prediction error signal in machine learning?

There is no error signal. The agent is learning from feedback about the world, and can use its learning to make decisions, but has no prediction error signal. Sure, we could construct one — by computing the difference between the probability distributions before and after the coin arrived — but we don’t need one. The error signal is implicit.